So decision trees are here to tidy the dataset by looking at the values of the feature vector associated with each data point. In this example, after the splitting, the state seems tidier, most of the red rings have been put in Set 1 while a majority of blue crosses are in Set 2.

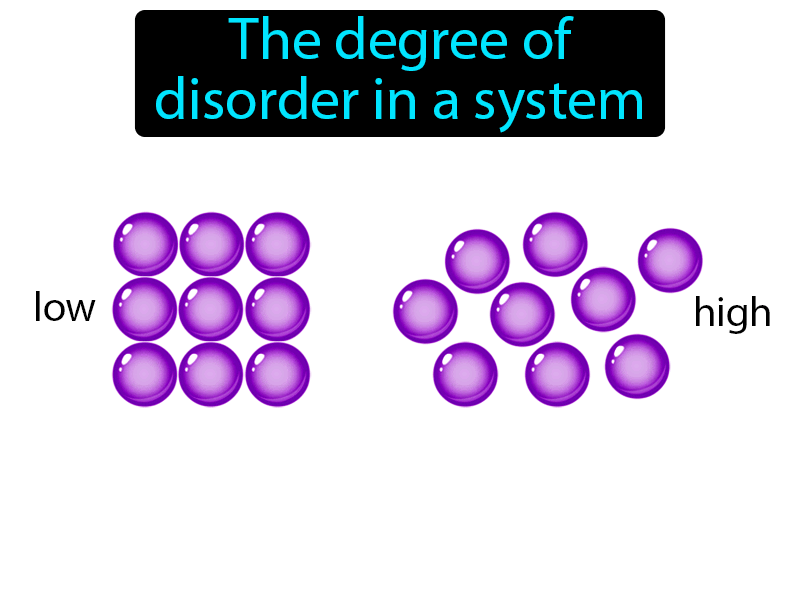

Based on their values, elements are put in Set 1 or Set 2. The decision starts by evaluating the feature values of the elements inside the initial set. Red rings and blue crosses symbolize elements with 2 different labels. On the figure below is depicted the splitting process. “To which group does this sample belongs to? Based on this arrangement of features, without doubt, it belongs to Group 1!” Of course, at the end of the tree, you want to have a clear answer. You maximize the purity of the groups as much as possible each time you create a new node of the tree (meaning you cut your set in two). You know their label since you construct the trees from the training set. You try to separate your data and group the samples together in the classes they belong to. In decision trees, the goal is to tidy the data. The requirement of this function is that it provides a minimum value if there is the same kind of objects in the set and a maximal value if there is a uniform mixing of objects with different labels (or categories) in the set. A mathematical function exists for estimating the mess among mathematical objects and we can apply it to our data. You know that objects must be on the shelves and probably grouped together, by type: books with books, toys with other toys …įortunately, the visual inspection can be replaced by a more mathematical approach for the data. (not everyone has the same measure :), but this is not the topic here). Usually, you use a subjective measure to estimate how messy is it. Imagine you are about to tidy your room or your kids’ room. So let us take this point of view and think that our dataset is like a messy room:Įntropy is an indicator of how messy your data is. I like the definition of entropy given sometimes by physicists: Let try to get some inspiration from him. Plato, with his cave, knew that metaphors are good ways for explaining deep ideas. Yet, its definition is not obvious for everyone.

You may have a look at Wikipedia to see the many uses of entropy. Metaphoric definition of entropyĮntropy is a concept used in Physics, mathematics, computer science (information theory) and other fields of science. This is really an important concept to get, in order to fully understand decision trees. Since I have spent quite some time studying the concept of entropy in academia, I will start my Machine Learning tutorial with it.Įvaluating the entropy is a key step in decision trees, however, it is often overlooked (as well as the other measures of the messiness of the data, like the Gini coefficient). In this series of blog posts, I want to clarify or at least provide a different explanation of some of the concepts in machine learning, in the hope of helping people increase their understanding of these methods. But I found these missing parts quite important to fully understand what’s going on in the algorithm. Sometimes, the emphasis is on the main part of the algorithm and some details are left missing. This concerns often details about the algorithms which are overlooked. However, from time to time, I find some particular points and explanations obscure. I got back on tracks quickly with the general idea of the mainstream algorithms. No doubts, the Internet is really useful, this is great. I have spent some time reading tutorials, blogs and Wikipedia. "hypothesis"), which predicts for $n$ classes $\$Įmpirical class probabilities are: $y'_0 = 3/5 = 0.I recently wanted to refresh my memory about Machine Learning methods. Namely, suppose that you have some fixed model (a.k.a. One way to interpret cross-entropy is to see it as a (minus) log-likelihood for the data $y_i'$, under a model $y_i$.
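To make the "messiness" function concrete, here is a minimal sketch. It assumes the function in question is the Shannon entropy, $H = -\sum_k p_k \log_2 p_k$, where $p_k$ is the fraction of elements carrying label $k$; the post describes only its requirements, so this choice is an assumption. The helper names `entropy` and `information_gain` and the ring/cross counts are hypothetical, chosen for illustration and not read off the figure.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a collection of class labels."""
    total = len(labels)
    if total == 0:
        return 0.0
    counts = Counter(labels)
    # Sum of -p * log2(p) over the classes that actually occur.
    return sum(-(c / total) * log2(c / total) for c in counts.values())


def information_gain(parent, children):
    """Entropy of the parent minus the size-weighted entropy of the children."""
    total = len(parent)
    weighted = sum(len(child) / total * entropy(child) for child in children)
    return entropy(parent) - weighted


# Hypothetical counts (assumption): 10 red rings and 10 blue crosses.
initial_set = ["ring"] * 10 + ["cross"] * 10
set_1 = ["ring"] * 8 + ["cross"] * 2  # mostly red rings
set_2 = ["ring"] * 2 + ["cross"] * 8  # mostly blue crosses

print("pure set:     ", entropy(["ring"] * 5))
print("initial set:  ", entropy(initial_set))
print("gain of split:", information_gain(initial_set, [set_1, set_2]))
```

With two labels, the entropy is lowest for a set holding a single kind of object and highest for an even mix, which matches the requirement stated in the text. A positive gain means the two subsets are, on average, tidier than the set they came from.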
